Recent Marine Heat Waves Caused by Undersea Volcanic Eruptions, Not Human CO2

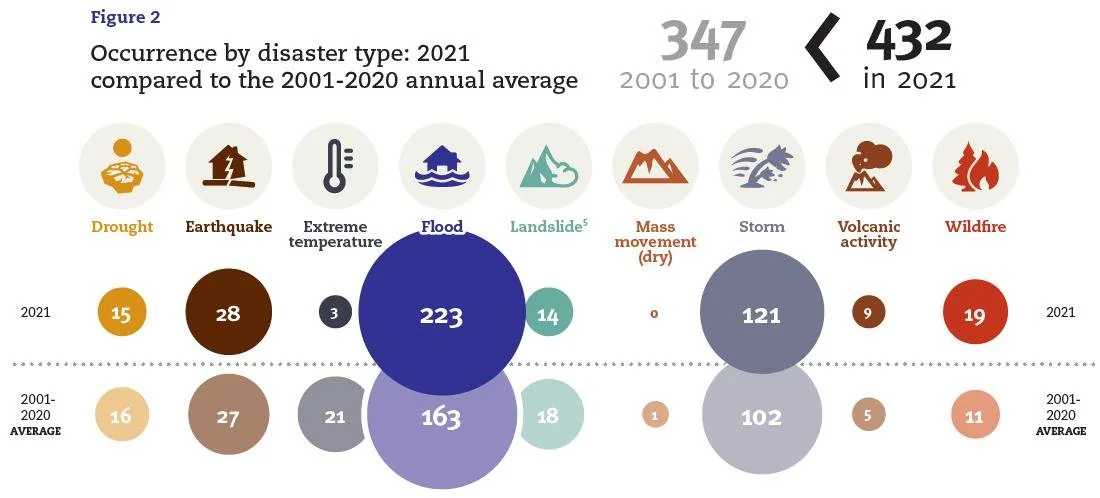

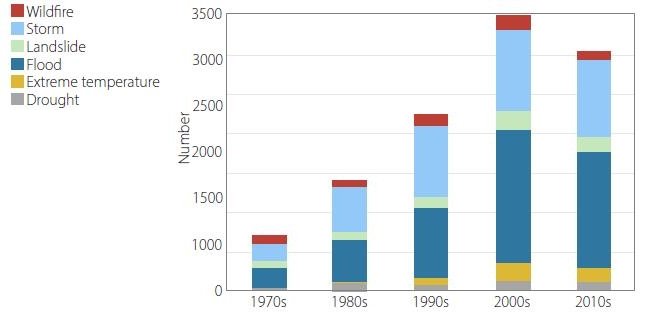

In a previous post, I showed that submarine volcanic eruptions don’t contribute to global warming, despite releasing enormous amounts of explosive energy. But they do contribute to regional climate change in the oceans, such as marine heat waves and shrinkage of polar sea ice, a retired geologist explained in a recent lecture.

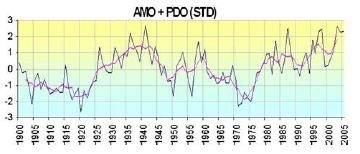

Wyss Yim, who holds positions at several universities in Hong Kong, says that undersea volcanic eruptions – rather than CO2 – are an important driver of regional climate variability. The release of geothermal heat from these eruptions can explain oceanic heat waves, polar sea-ice changes and stronger-than-normal cycles of ENSO (the El Niño – Southern Oscillation), which causes temperature fluctuations and other climatic effects in the Pacific.

Submarine eruptions can eject basaltic lava at temperatures as high as 1,200 degrees Celsius (2,200 degrees Fahrenheit), often from multiple vents over a large area. Even though the hot lava is quickly quenched by the surrounding seawater, the heat absorbed by the ocean can have local and regional impacts that last for years.

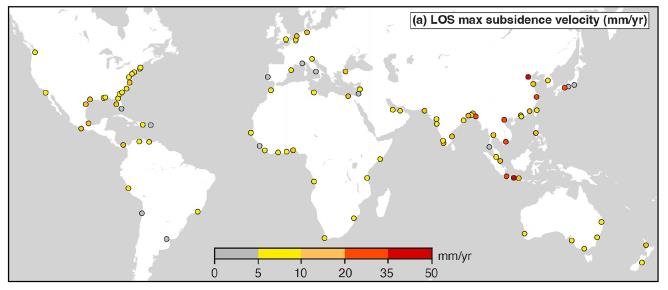

The Pacific Ocean in particular is home to many active terrestrial and submarine volcanoes, especially around the Ring of Fire bounding the Pacific tectonic plate, as illustrated in the figure below. Yim has identified eight underwater eruptions in the Pacific from 2011 to 2022 that had long-lasting effects on the climate, six of which emanated from the Ring of Fire.

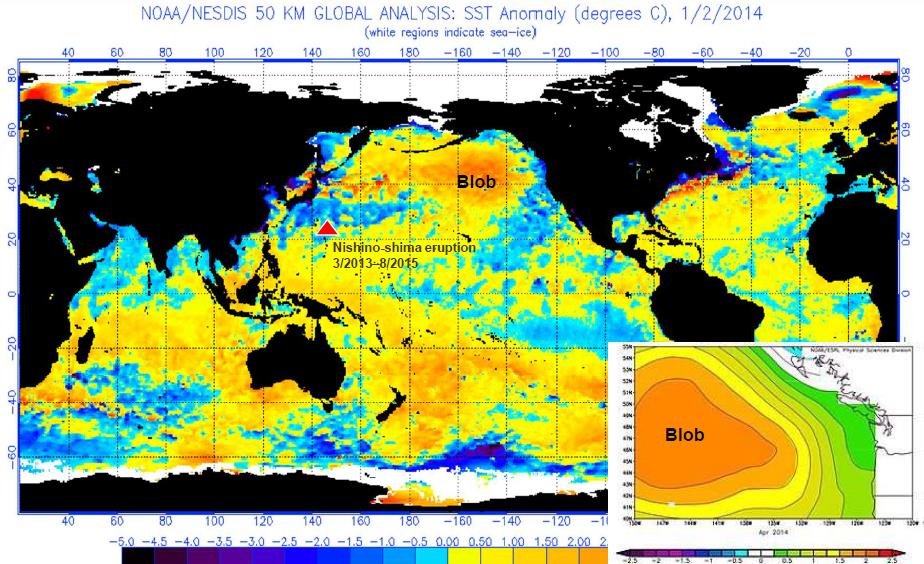

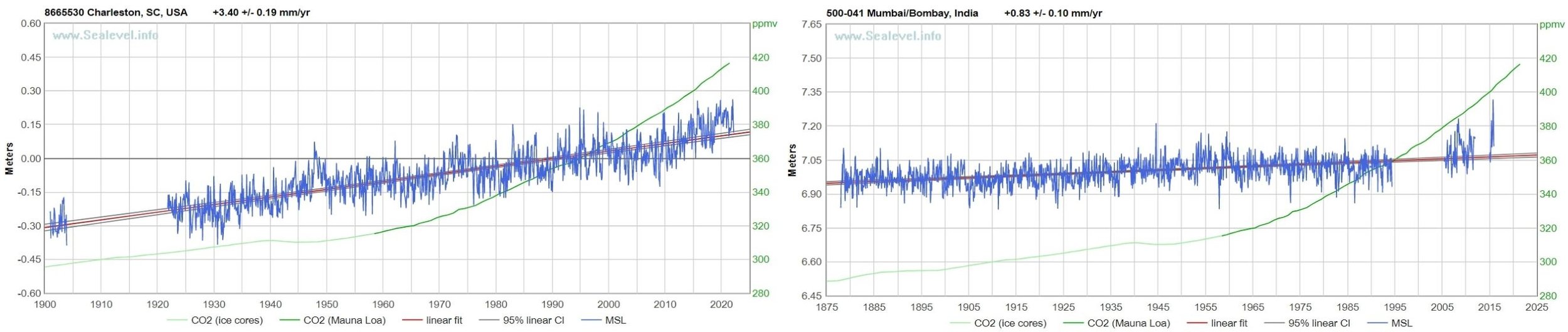

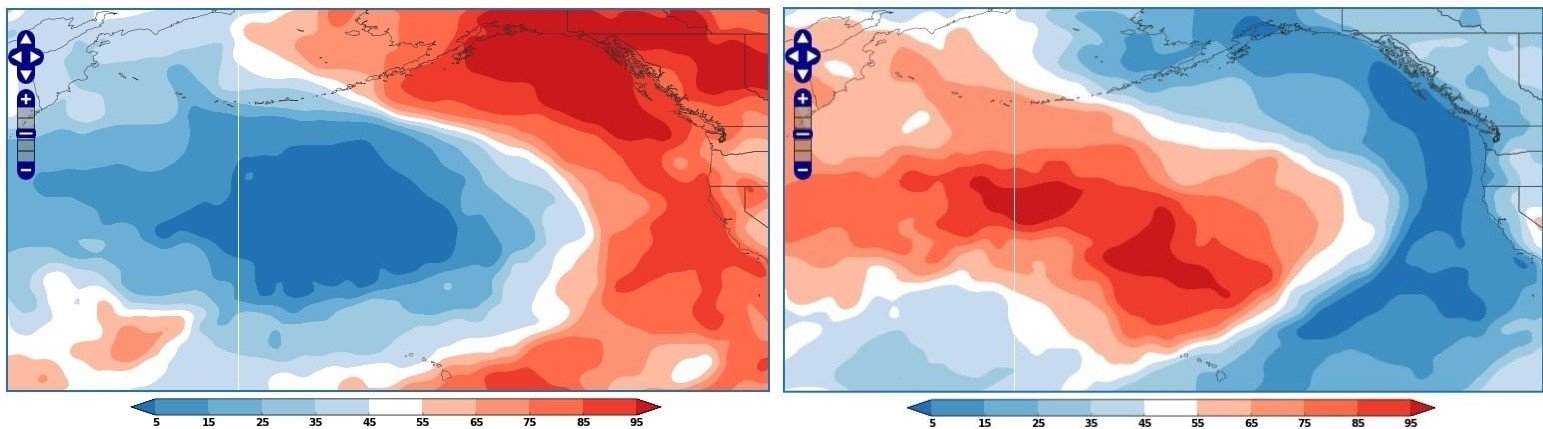

One of these eruptions was from the Nishino-shima volcano south of Tokyo, which underwent a massive blow-out, initially undersea, that persisted from March 2013 to August 2015. Yim says the event was the principal cause of the so-called North Pacific Blob, a massive pool of warm seawater that formed in the northeast Pacific from 2013 to 2015, extending all the way from Alaska to the Baja Peninsula in Mexico and up to 400 meters (1,300 feet) deep. Climate scientists at the time, however, attributed the Blob to global warming.

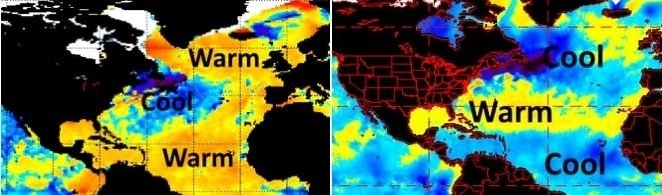

The Nishino-shima eruption, together with other submarine eruptions in the Pacific during 2014 and 2015, was a major factor in prolonging and strengthening the massive 2014-2017 El Niño. A map depicting sea surface temperatures in January 2014, at the onset of El Niño and almost a year after the emergence of the Blob, is shown in the next figure. At that time, surface temperatures across the Blob were about 2.5 degrees Celsius (4.5 degrees Fahrenheit) above normal.
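The two Fahrenheit figures quoted in this post follow different conversion rules: the lava temperature is an absolute reading, while the Blob figure is a difference from normal, so the 32-degree offset does not apply to it. A minimal sketch of the arithmetic, with function names that are purely illustrative:

```python
def c_to_f_absolute(temp_c: float) -> float:
    """Convert an absolute temperature from Celsius to Fahrenheit."""
    return temp_c * 9.0 / 5.0 + 32.0


def c_to_f_difference(delta_c: float) -> float:
    """Convert a temperature difference (anomaly); no 32-degree offset."""
    return delta_c * 9.0 / 5.0


# Lava temperature quoted earlier in the post (an absolute reading).
lava_f = c_to_f_absolute(1200.0)

# Blob surface anomaly quoted above (a difference from normal).
anomaly_f = c_to_f_difference(2.5)

print(f"Lava: {lava_f:.0f} F, Blob anomaly: {anomaly_f:.1f} F")
```

The lava value comes out at 2,192 degrees Fahrenheit, which the post rounds to 2,200, and the anomaly comes out at 4.5 degrees Fahrenheit.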

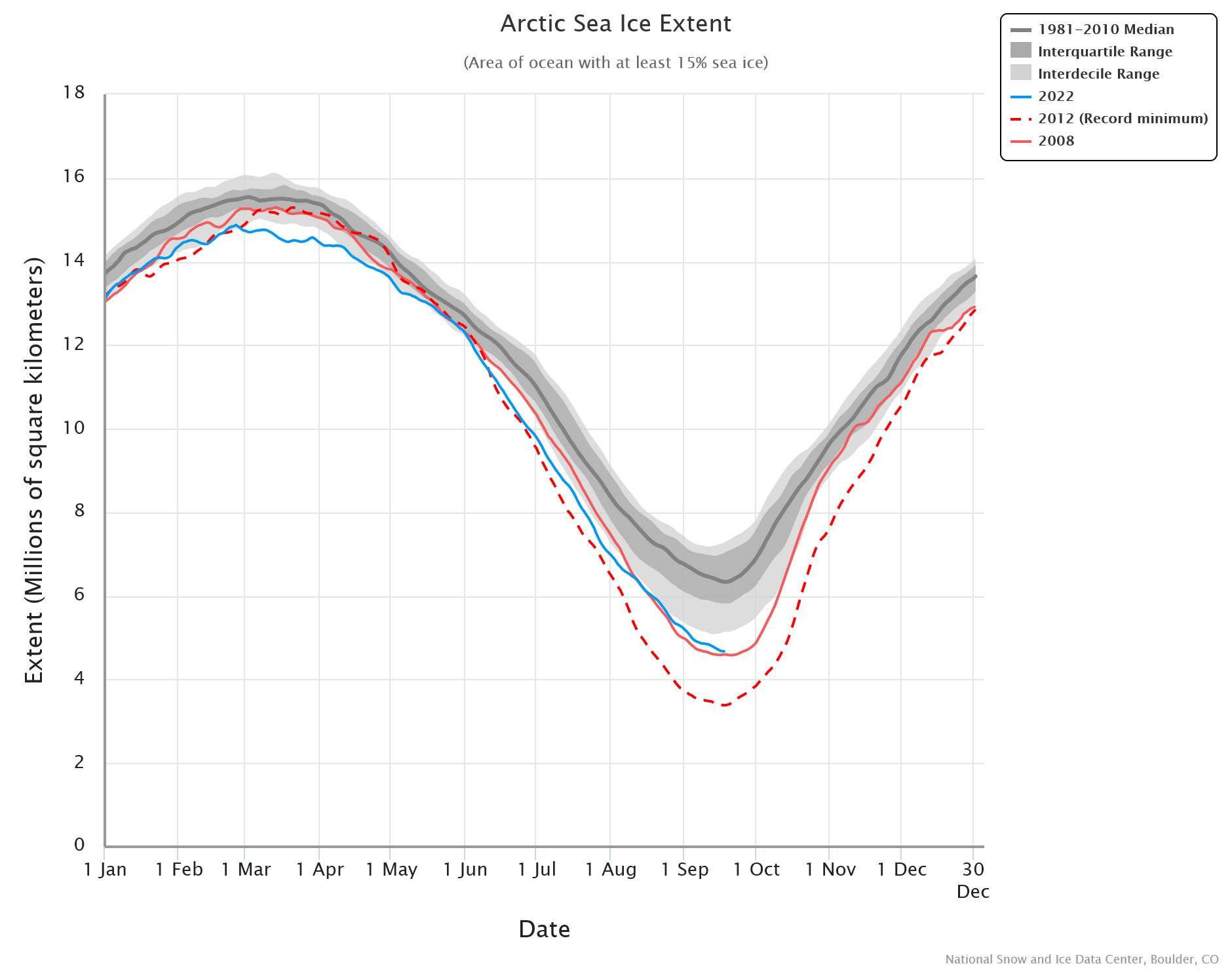

By mid-2014, the Blob covered an area roughly 1,600 km (1,000 miles) on a side. Its vast extent, states Yim, contributed to the gradual decline of Arctic sea ice between 2014 and 2016, especially in the vicinity of the Bering Strait. The Blob also led to two successive years without winter along the northeast Pacific coast.

Biodiversity in the region suffered too, with sustained toxic algal blooms. Yet none of this was caused by climate change.

The 2014-2017 El Niño was further exacerbated by the eruption from May to June 2015 of the Wolf volcano on the Galapagos Islands in the eastern Pacific. Although the Wolf volcano is on land, its lava flows entered the ocean. The figure below shows the location of the Wolf eruption, along with submarine eruptions of both the Axial Seamount close to the Blob and the Hunga volcano in Tonga in the South Pacific.

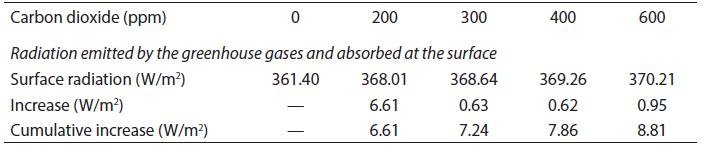

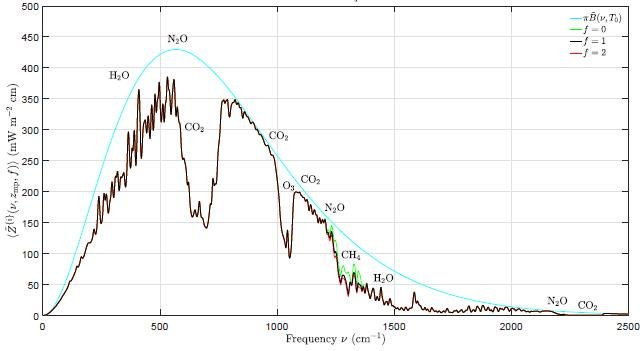

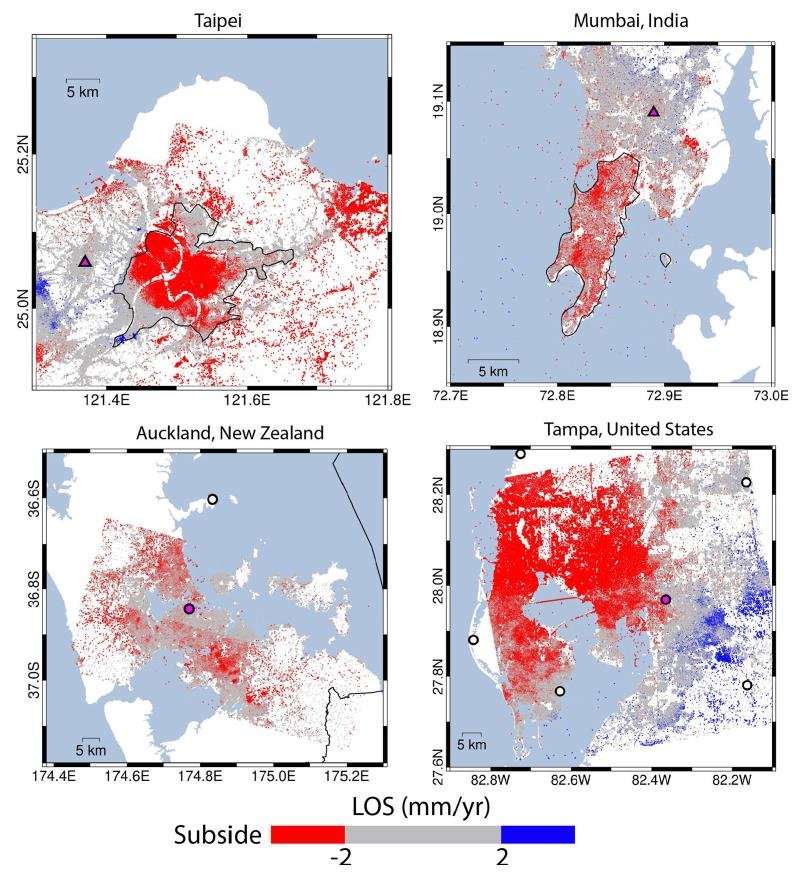

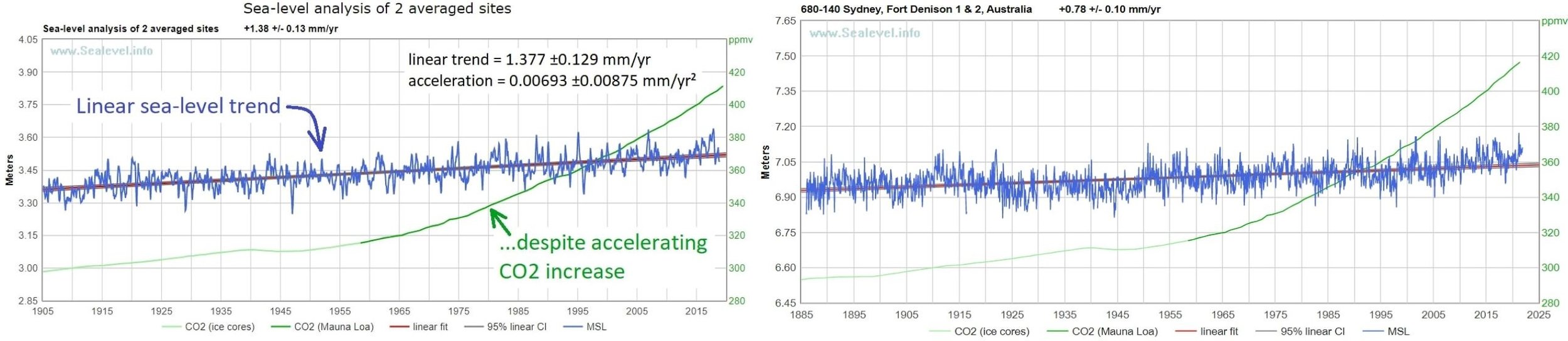

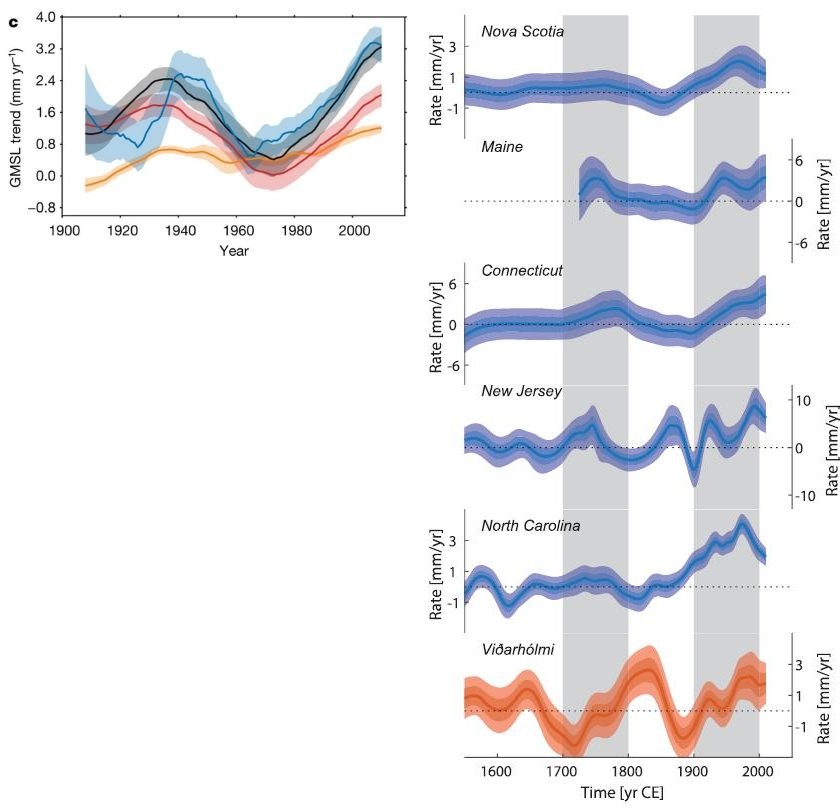

According to Yim, the most significant drivers of the global climate are changes in the earth’s orbit and the sun, followed by geothermal heat, and – only in third place – human-induced changes such as increased greenhouse gases. Geothermal heat from submarine volcanic eruptions causes not only marine heat waves and contraction of polar sea ice, but also local changes in ocean currents, sea levels and surface winds.

Detailed measurements of oceanic variables such as temperature, pressure, salinity and chemistry are made today by the worldwide network of 3,900 Argo profiling floats. The floats are battery-powered robotic buoys that patrol the oceans, sinking 1-2 km (0.6-1.2 miles) deep once every 10 days and then bobbing up to the surface, recording the properties of the water as they ascend. When the floats eventually reach the surface, the data is transmitted to a satellite.
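To get a feel for the data volume this sampling schedule implies, here is a rough back-of-the-envelope sketch. It assumes every one of the 3,900 floats completes exactly one dive-and-ascent cycle every 10 days all year, which is an idealization; the class and method names are hypothetical and not part of any Argo software.

```python
from dataclasses import dataclass


@dataclass
class ArgoFleet:
    """Idealized fleet: every float is active and cycles on schedule."""
    floats: int = 3900        # fleet size quoted in the post
    cycle_days: float = 10.0  # one dive-and-ascent cycle per float

    def profiles_per_year(self, days_per_year: float = 365.0) -> float:
        # Each completed cycle yields one vertical profile per float.
        cycles_per_float = days_per_year / self.cycle_days
        return self.floats * cycles_per_float


fleet = ArgoFleet()
print(f"Approximate profiles per year: {fleet.profiles_per_year():,.0f}")
```

Under these assumptions the fleet would return on the order of 140,000 profiles a year; the real number depends on how many floats are operating at any given time.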

Yim says his studies show that the role played by submarine volcanoes in governing the planet’s climate has been underrated. Eruptions of any of the several thousand active underwater volcanoes can have substantial regional effects on climate, as just discussed.

He suggests that the influence of volcanic eruptions on atmospheric and oceanic circulation should be included in climate models. The only volcanic effect in current models is the atmospheric cooling produced by eruption plumes.
