How Clouds Hold the Key to Global Warming

One of the biggest weaknesses in computer climate models – the very models whose predictions underlie proposed political action on human CO2 emissions – is the representation of clouds and their response to global warming. The deficiencies in computer simulations of clouds are acknowledged even by climate modelers. Yet cloud behavior is key to whether future warming is a serious problem or not.

Uncertainty about clouds is why there’s such a wide range of future global temperatures predicted by computer models, once CO2 reaches twice its 1850 level: from a relatively mild 1.5 degrees Celsius (2.7 degrees Fahrenheit) to an alarming 4.5 degrees Celsius (8.1 degrees Fahrenheit). Current warming, according to NASA, is close to 1 degree Celsius (1.8 degrees Fahrenheit).
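The Fahrenheit figures quoted here are conversions of temperature differences, not of thermometer readings, so only the 9/5 scale factor applies and the familiar 32-degree offset drops out. A minimal Python sketch of that arithmetic follows; the function name is chosen here purely for illustration.

```python
def delta_c_to_delta_f(delta_c: float) -> float:
    """Convert a temperature difference from Celsius degrees to Fahrenheit degrees.

    A difference has no 32-degree offset; only the 9/5 scale factor applies.
    """
    return delta_c * 9.0 / 5.0


# The three warming figures mentioned in the text, in degrees Celsius.
for delta_c in (1.0, 1.5, 4.5):
    print(f"{delta_c} C of warming = {delta_c_to_delta_f(delta_c):.1f} F of warming")
```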

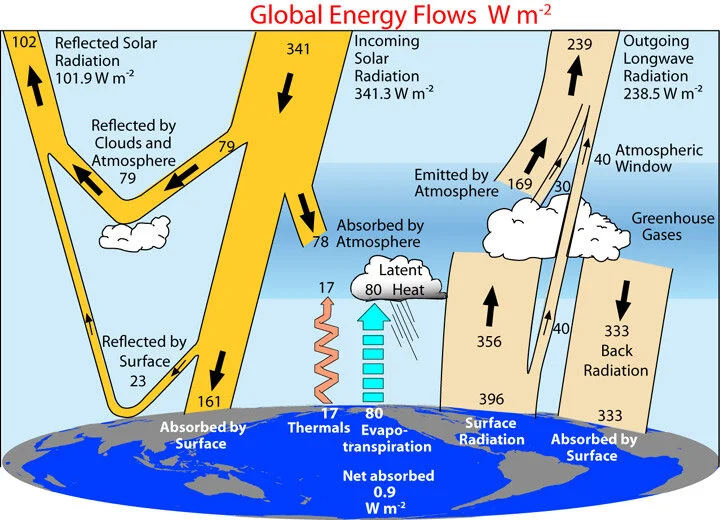

Clouds can both cool and warm the planet. Low-level clouds such as cumulus and stratus clouds are thick enough to reflect 30-60% of the sun’s radiation that strikes them back into space, so they act like a parasol and cool the earth’s surface. High-level clouds such as cirrus clouds, on the other hand, are thinner and allow most of the sun’s radiation to penetrate, but also act as a blanket preventing the escape of reradiated heat to space and thus warm the earth. Warming can result from either a reduction in low clouds, or an increase in high clouds, or both.

Our inability to model clouds satisfactorily is partly because we just don’t know much about their inner workings, whether during a cloud’s formation, when it rains, or when a cloud is absorbing or radiating heat. So a lot of adjustable parameters are needed to describe them. It’s partly also because actual clouds are much smaller than the minimum grid scale of the models run on supercomputers, by as much as several hundred or even a thousand times. For that reason, clouds are represented in computer models by average values of size, altitude, number and geographic location.
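To make the idea of representing clouds by averages concrete, here is a toy Python sketch. It is not how any particular climate model works: the 4-by-4 mask, its values and the function name are all hypothetical. It only shows that once a grid cell is summarized by an average, the position and shape of the individual clouds inside it are lost.

```python
def cloud_fraction(mask: list[list[int]]) -> float:
    """Return the fraction of fine-scale points in one grid cell that are cloudy.

    `mask` is a hypothetical fine-scale picture of a single model grid cell,
    with 1 marking a cloudy point and 0 a clear one.
    """
    total_points = sum(len(row) for row in mask)
    cloudy_points = sum(sum(row) for row in mask)
    return cloudy_points / total_points


# Made-up fine-scale view of one grid cell (illustrative values only).
fine_mask = [
    [0, 1, 1, 0],
    [0, 1, 0, 0],
    [0, 0, 0, 0],
    [1, 0, 0, 0],
]

# The coarse model would carry only this single number for the whole cell.
print(cloud_fraction(fine_mask))
```

Any other arrangement of four cloudy points in the same cell gives the same average, which is one reason adjustable parameters are needed to fill in what the grid cannot resolve.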

Most climate models predict that low cloud cover will decrease as the planet heats up, but this is by no means certain, and meaningful observational evidence on how clouds respond is sparse. To remedy the shortcoming, a researcher at Columbia University’s Earth Institute has embarked on a project to study how low clouds respond to climate change, especially in the tropics, which receive the most sunlight and where low clouds are extensive.

The three-year project will utilize NASA satellite data to investigate the response of puffy cumulus clouds and more layered stratocumulus clouds to both surface temperature and the stability of the lower atmosphere. These are the two main influences on low cloud formation. It’s only recent satellite technology that makes it possible to clearly distinguish the two types of cloud from each other and from higher clouds. The knowledge obtained will test how well computer climate models simulate present-day low cloud behavior, as well as help narrow the range of warming expected as CO2 continues to rise.

High clouds are controversial. Climate models predict that high clouds will get higher and become more numerous as the atmosphere warms, resulting in a greater blanket effect and even more warming. This is an example of expected positive climate feedback – feedback that amplifies global warming. The expected decrease in low cloud cover with warming is also a positive feedback, since fewer low clouds mean less sunlight reflected back to space.

But there’s empirical satellite evidence, obtained by scientists from the University of Alabama and the University of Auckland in New Zealand, that cloud feedback for both low-level and high-level clouds is negative. The satellite data also support an earlier proposal by atmospheric climatologist Richard Lindzen that high-level clouds near the equator open up, like the iris of an eye, to release extra heat when the temperature rises – also a negative feedback effect.

If indeed cloud feedback is negative rather than positive, it’s possible that combined negative feedbacks in the climate system dominate the positive feedbacks from water vapor, which is the primary greenhouse gas, and from snow and ice. That would mean that the overall response of the climate to added CO2 in the atmosphere is to lessen, rather than magnify, the temperature increase from CO2 acting alone, the reverse of what climate models say.
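The difference between net positive and net negative feedback can be illustrated with a common textbook idealization, in which the final warming equals the no-feedback warming divided by one minus a net feedback factor. This relation is a simplification brought in here for illustration, not something taken from the models or studies discussed above, and the input values in the sketch are hypothetical.

```python
def warming_with_feedback(no_feedback_warming: float, feedback_factor: float) -> float:
    """Idealized linear feedback: final warming = initial warming / (1 - f).

    f > 0 is net positive feedback (amplifies the warming),
    f < 0 is net negative feedback (lessens it).
    The relation only makes sense for f < 1.
    """
    if feedback_factor >= 1.0:
        raise ValueError("feedback_factor must be less than 1")
    return no_feedback_warming / (1.0 - feedback_factor)


# Hypothetical numbers: 1.0 degree C of warming from CO2 acting alone.
for f in (0.5, 0.0, -1.0):
    print(f"net feedback factor {f:+.1f}: {warming_with_feedback(1.0, f):.2f} C")
```

With a positive factor the result exceeds the starting value, and with a negative factor it falls below it, which is the distinction the paragraph above draws.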

The latest generation of computer models, known as CMIP6, predicts an even greater – and potentially deadly – range of future warming than earlier models. This is largely because the models find that low clouds would thin out, and many would not form at all, in a hotter world. The result would be even stronger positive cloud feedback and additional warming. However, as many of the models are unable to accurately simulate actual temperatures in recent decades, their predictions about clouds are suspect.
